BinderDeath

Mechanism overview

The unlinkToDeath flow mirrors linkToDeath, so it is not traced separately in these notes.

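A death notification lets the Bp side (the client process) track whether the Bn side (the server process) is still alive: when the Bn process dies, the Bp side is notified.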

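- Definition: AppDeathRecipient implements IBinder.DeathRecipient (IBinder::DeathRecipient in native code); the essential part is its binderDied() method, which receives the death notification.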

- Registration: binder->linkToDeath(AppDeathRecipient) registers the AppDeathRecipient death notification on the Binder.

The Bp side only needs to override binderDied() and do its cleanup work there; once the Bn side dies, binderDied() is called back to handle it.
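The define, register, callback contract described above can be sketched with a toy model. This is only an illustration under stated assumptions: ToyDeathRecipient, ToyProxy, CleanupRecipient and remoteDied() are hypothetical names invented here, not Android classes, and no real IPC takes place.

```cpp
#include <cassert>
#include <memory>
#include <string>
#include <utility>
#include <vector>

// Hypothetical stand-in for IBinder::DeathRecipient.
struct ToyDeathRecipient {
    virtual ~ToyDeathRecipient() = default;
    virtual void binderDied() = 0;
};

// Hypothetical stand-in for the proxy (Bp) side of a remote service.
class ToyProxy {
public:
    // Registration: remember the recipient for later.
    void linkToDeath(std::shared_ptr<ToyDeathRecipient> r) {
        recipients_.push_back(std::move(r));
    }
    // Called by the toy "driver" once the serving (Bn) process is gone.
    void remoteDied() {
        for (auto& r : recipients_) r->binderDied();
        recipients_.clear();
    }
private:
    std::vector<std::shared_ptr<ToyDeathRecipient>> recipients_;
};

// Client-side recipient: the override is where cleanup work belongs.
struct CleanupRecipient : ToyDeathRecipient {
    explicit CleanupRecipient(std::vector<std::string>& log) : log_(log) {}
    void binderDied() override { log_.push_back("cleanup after server death"); }
    std::vector<std::string>& log_;
};

// Registers one recipient, simulates the Bn process dying, returns the log.
std::vector<std::string> run_link_to_death_demo() {
    std::vector<std::string> log;
    ToyProxy proxy;
    proxy.linkToDeath(std::make_shared<CleanupRecipient>(log));
    proxy.remoteDied();
    return log;
}
```

The rest of these notes trace how the real framework and driver get from the registration call to that callback.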

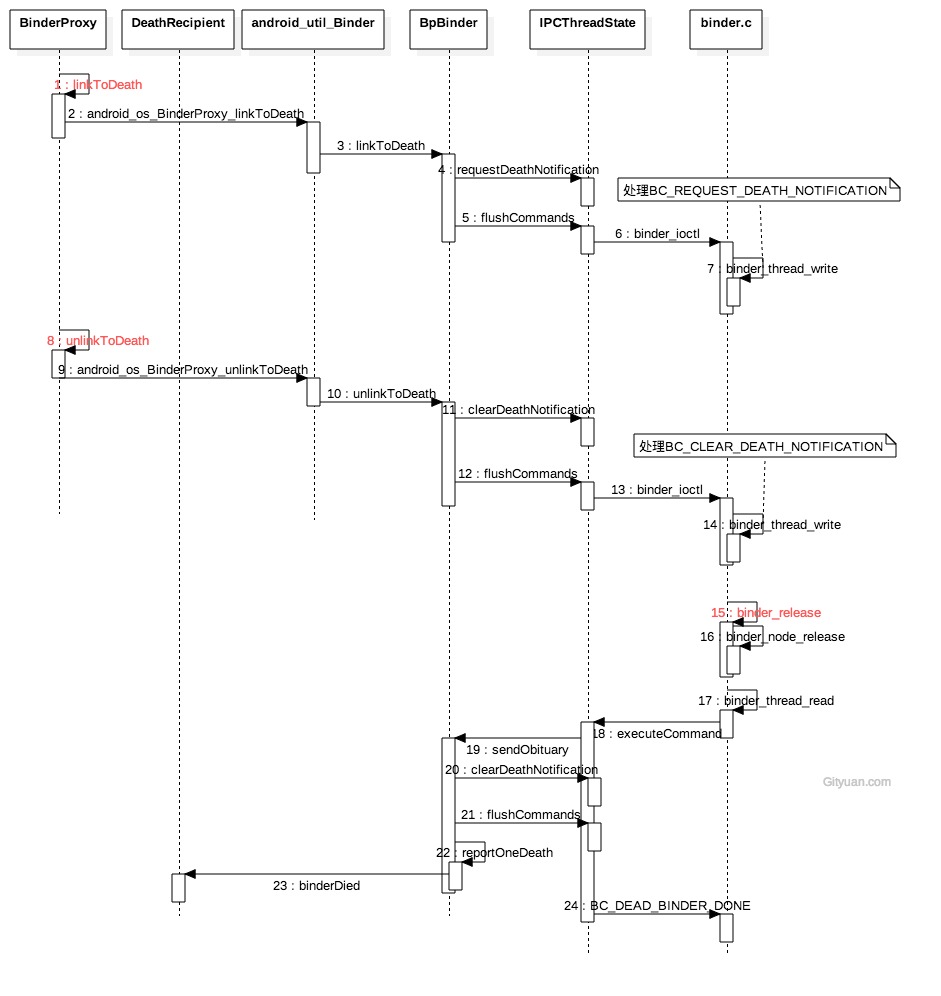

linkToDeath

android_os_BinderProxy_linkToDeath

static void android_os_BinderProxy_linkToDeath(JNIEnv* env, jobject obj,

jobject recipient, jint flags)

{

//Read the BinderProxy.mObject field, i.e. the BpBinder object

IBinder* target = (IBinder*)env->GetLongField(obj, gBinderProxyOffsets.mObject);

sp<JavaDeathRecipient> jdr = new JavaDeathRecipient(env, recipient, list); //list is this proxy's DeathRecipientList (its lookup is elided in this excerpt)

//Register the death notification (see BpBinder::linkToDeath below)

status_t err = target->linkToDeath(jdr, NULL, flags);

}

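- Obtain the DeathRecipientList: its member mList records the JavaDeathRecipient entries of this BinderProxy;

- A single BpBinder can have several death callbacks registered

- Create the JavaDeathRecipient: it derives from IBinder::DeathRecipient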

linkToDeath

status_t BpBinder::linkToDeath(

const sp<DeathRecipient>& recipient, void* cookie, uint32_t flags)

{

IPCThreadState* self = IPCThreadState::self();

self->requestDeathNotification(mHandle, this);

self->flushCommands();

}

requestDeathNotification

status_t IPCThreadState::requestDeathNotification(int32_t handle, BpBinder* proxy)

{

mOut.writeInt32(BC_REQUEST_DEATH_NOTIFICATION);

mOut.writeInt32((int32_t)handle);

mOut.writePointer((uintptr_t)proxy);

return NO_ERROR;

}
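requestDeathNotification only appends three values to the outgoing buffer: the command, the handle, and the proxy pointer. The driver later reads them back in the same order. A minimal sketch of that round trip follows, assuming a plain byte vector in place of Parcel. kToyRequestDeathNotification is a placeholder value (the real BC_REQUEST_DEATH_NOTIFICATION is an ioctl-style encoded constant from the binder UAPI header), and append, take, encode and decode are hypothetical helpers.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <cstring>
#include <vector>

// Placeholder only; not the real command value.
constexpr uint32_t kToyRequestDeathNotification = 0x1001;

struct DeathRequest {
    uint32_t cmd;
    uint32_t handle;
    uintptr_t cookie;  // the BpBinder pointer in the real code
};

// Append the raw bytes of v, like mOut.writeInt32 / writePointer in spirit.
template <typename T>
void append(std::vector<uint8_t>& out, T v) {
    const uint8_t* p = reinterpret_cast<const uint8_t*>(&v);
    out.insert(out.end(), p, p + sizeof(T));
}

// Read one value and advance the cursor, like get_user + "ptr += sizeof".
template <typename T>
T take(const std::vector<uint8_t>& in, std::size_t& pos) {
    T v;
    std::memcpy(&v, in.data() + pos, sizeof(T));
    pos += sizeof(T);
    return v;
}

// User-space side: command, then handle, then the proxy pointer as cookie.
std::vector<uint8_t> encode(uint32_t handle, const void* proxy) {
    std::vector<uint8_t> out;
    append<uint32_t>(out, kToyRequestDeathNotification);
    append<uint32_t>(out, handle);
    append<uintptr_t>(out, reinterpret_cast<uintptr_t>(proxy));
    return out;
}

// Driver side: consume the fields in the same order.
DeathRequest decode(const std::vector<uint8_t>& in) {
    std::size_t pos = 0;
    DeathRequest r;
    r.cmd = take<uint32_t>(in, pos);
    r.handle = take<uint32_t>(in, pos);
    r.cookie = take<uintptr_t>(in, pos);
    return r;
}
```

The cookie is opaque to the driver; it simply hands the same value back with BR_DEAD_BINDER, which is how user space recovers the BpBinder later.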

flushCommands

void IPCThreadState::flushCommands()

{

if (mProcess->mDriverFD <= 0)

return;

talkWithDriver(false);

}

binder_ioctl_write_read

static int binder_ioctl_write_read(struct file *filp,

unsigned int cmd, unsigned long arg,

struct binder_thread *thread)

{

int ret = 0;

struct binder_proc *proc = filp->private_data;

void __user *ubuf = (void __user *)arg;

struct binder_write_read bwr;

if (copy_from_user(&bwr, ubuf, sizeof(bwr))) { //copy the user-space data at ubuf into bwr

ret = -EFAULT;

goto out;

}

if (bwr.write_size > 0) { //the write buffer holds data at this point (see binder_thread_write below)

ret = binder_thread_write(proc, thread,

bwr.write_buffer, bwr.write_size, &bwr.write_consumed);

...

}

if (bwr.read_size > 0) { //the read buffer is empty at this point

...

}

if (copy_to_user(ubuf, &bwr, sizeof(bwr))) { //copy the kernel-side bwr back to user space at ubuf

ret = -EFAULT;

goto out;

}

out:

return ret;

}

binder_thread_write

static int binder_thread_write(struct binder_proc *proc,

struct binder_thread *thread,

binder_uintptr_t binder_buffer, size_t size,

binder_size_t *consumed)

{

uint32_t cmd;

//proc and thread both describe the calling (initiating) process

while (ptr < end && thread->return_error == BR_OK) {

get_user(cmd, (uint32_t __user *)ptr); //reads BC_REQUEST_DEATH_NOTIFICATION

ptr += sizeof(uint32_t);

switch (cmd) {

case BC_REQUEST_DEATH_NOTIFICATION:{ //register a death notification

uint32_t target;

void __user *cookie;

struct binder_ref *ref;

struct binder_ref_death *death;

get_user(target, (uint32_t __user *)ptr); //read target (the handle)

ptr += sizeof(uint32_t);

get_user(cookie, (void __user * __user *)ptr); //read the BpBinder pointer

ptr += sizeof(void *);

ref = binder_get_ref(proc, target); //look up the binder_ref of the target service

if (cmd == BC_REQUEST_DEATH_NOTIFICATION) {

//a native Bp may register several recipients, but the kernel accepts only one death notification per binder_ref

if (ref->death) {

break;

}

death = kzalloc(sizeof(*death), GFP_KERNEL);

INIT_LIST_HEAD(&death->work.entry);

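death->cookie = cookie; //the BpBinder pointer

ref->death = death; //attach death to the binder_ref (the key step)

//if the process hosting the target binder service is already dead, send the death notification right away; this is the unusual case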

if (ref->node->proc == NULL) {

ref->death->work.type = BINDER_WORK_DEAD_BINDER;

//if the current thread is a binder thread, add the work directly to this thread's todo queue

if (thread->looper & (BINDER_LOOPER_STATE_REGISTERED | BINDER_LOOPER_STATE_ENTERED)) {

list_add_tail(&ref->death->work.entry, &thread->todo);

} else {

list_add_tail(&ref->death->work.entry, &proc->todo);

wake_up_interruptible(&proc->wait);

}

}

} else {

...

}

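While handling BC_REQUEST_DEATH_NOTIFICATION, if the process hosting the target binder service happens to be dead already, the driver adds a BINDER_WORK_DEAD_BINDER work item to the todo queue and delivers the death notification immediately. This is the unusual case.

The more common case is that the process hosting the binder service dies later: binder_release is called, which leads to binder_node_release, and that is where the death notification is issued.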
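The two rules in the BC_REQUEST_DEATH_NOTIFICATION branch (at most one death record per binder_ref, and immediate queuing when the target is already dead) can be modelled in a few lines. This is a toy sketch, not driver code: RegNode, RegDeath, RegRef and request_death_notification are hypothetical names, and the todo queue is reduced to a deque of cookies.

```cpp
#include <cassert>
#include <cstdint>
#include <deque>
#include <memory>

struct RegNode { bool owner_alive = true; };   // models node->proc != NULL

struct RegDeath { uintptr_t cookie; };          // models binder_ref_death

struct RegRef {
    RegNode* node = nullptr;
    std::unique_ptr<RegDeath> death;            // at most one per ref
};

enum class RequestResult { Registered, AlreadyRegistered, RegisteredAndQueued };

// todo models the requesting side's todo queue; each entry is the cookie of
// a pending DEAD_BINDER work item.
RequestResult request_death_notification(RegRef& ref, uintptr_t cookie,
                                         std::deque<uintptr_t>& todo) {
    if (ref.death) return RequestResult::AlreadyRegistered;  // "if (ref->death) break;"
    ref.death = std::make_unique<RegDeath>(RegDeath{cookie});
    if (!ref.node->owner_alive) {
        // Target already dead: queue the notification right away.
        todo.push_back(cookie);
        return RequestResult::RegisteredAndQueued;
    }
    return RequestResult::Registered;
}
```

Because the kernel keeps a single record per ref, fanning one notification out to several recipients has to happen in user space.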

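Triggering the death notification

When the process hosting a Binder service dies, its resources are released, and Binder is one of those resources. binder_open opens the binder driver /dev/binder, a character device, and yields a file descriptor. When the process exits, its open files are closed, which invokes the driver's close handling; the corresponding file operation is release(). Once the binder fd is released, the function that runs is binder_release().

Not every close system call triggers release(), though. release() is called only when the device's file structure is actually freed; the kernel keeps a count of how many users that file structure has. This also covers applications that never explicitly close their files: at exit() the kernel frees the process's memory and closes its open files, and that close path ultimately releases binder as well.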
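The "only the last close counts" behaviour cannot be observed on /dev/binder from an ordinary program, but the same reference counting is visible with a pipe, used here purely as a stand-in. In the sketch below (POSIX calls only; PipeProbe and probe_last_close are hypothetical names), the write end is duplicated with dup(). Closing one descriptor changes nothing for the reader, and end-of-file appears only after the last descriptor is closed.

```cpp
#include <cassert>
#include <cerrno>
#include <fcntl.h>
#include <unistd.h>

struct PipeProbe {
    bool still_open_after_first_close;  // read() reported EAGAIN, not EOF
    bool eof_after_last_close;          // read() returned 0
};

PipeProbe probe_last_close() {
    PipeProbe result{false, false};
    int fds[2];
    if (pipe(fds) != 0) return result;
    int rd = fds[0];
    int wr = fds[1];
    int wr2 = dup(wr);  // second descriptor for the same open file
    if (wr2 < 0) { close(rd); close(wr); return result; }

    // Non-blocking reads so an empty pipe reports EAGAIN instead of hanging.
    fcntl(rd, F_SETFL, fcntl(rd, F_GETFL) | O_NONBLOCK);
    char c;

    close(wr);  // not the last reference: the write side stays alive
    ssize_t n = read(rd, &c, 1);
    result.still_open_after_first_close = (n == -1 && errno == EAGAIN);

    close(wr2);  // last reference: the kernel now tears the write side down
    n = read(rd, &c, 1);
    result.eof_after_last_close = (n == 0);

    close(rd);
    return result;
}
```

For binder the consequence is the same in spirit: binder_release runs once the last reference to the opened /dev/binder file goes away, whether through an explicit close or through process exit.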

Diagram: triggering and handling the death notification

graph TB

subgraph "binder_init calls create_singlethread_workqueue to create a workqueue named binder, a simple and effective kernel thread mechanism for deferring work"

queue_work

end

subgraph "Frees binder_thread, binder_node, binder_ref, binder_work, binder_buf, then __free_page"

binder_deferred_release

end

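subgraph "Walks every binder_ref of the binder_node. Where a death notification is registered, adds BINDER_WORK_DEAD_BINDER to the todo queue of the binder_ref's process and wakes a binder thread waiting on proc->wait, which runs binder_thread_read"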

binder_node_release

end

subgraph "Takes the binder_work queued earlier from the thread or process todo queue, type BINDER_WORK_DEAD_BINDER, then writes death->cookie (the BpBinder) and the BR_DEAD_BINDER command to user space"

binder_thread_read

end

subgraph "Calls recipient->binderDied on every element of mObituaries"

BpBinder::sendObituary

end

binder_fops-->binder_release(".release = binder_release")-->|adds binder_deferred_work to the workqueue|queue_work("queue_work(binder_deferred_workqueue, &binder_deferred_work);")

-->binder_deferred_func-->binder_deferred_release-->binder_node_release-->binder_thread_read-->IPCThreadState::executeCommand-->|case BR_DEAD_BINDER: mIn.readPointer yields the BpBinder*|BpBinder::sendObituary

[-> binder.c]

binder_fops

static const struct file_operations binder_fops = {

.owner = THIS_MODULE,

.poll = binder_poll,

.unlocked_ioctl = binder_ioctl,

.compat_ioctl = binder_ioctl,

.mmap = binder_mmap,

.open = binder_open,

.flush = binder_flush,

.release = binder_release, //the handler behind release()

};

binder_release

static int binder_release(struct inode *nodp, struct file *filp)

{

struct binder_proc *proc = filp->private_data;

debugfs_remove(proc->debugfs_entry);

binder_defer_work(proc, BINDER_DEFERRED_RELEASE);

return 0;

}

binder_defer_work

static void binder_defer_work(struct binder_proc *proc, enum binder_deferred_state defer) {

mutex_lock(&binder_deferred_lock); //take the lock

//add BINDER_DEFERRED_RELEASE

proc->deferred_work |= defer;

if (hlist_unhashed(&proc->deferred_work_node)) {

hlist_add_head(&proc->deferred_work_node, &binder_deferred_list);

//add binder_deferred_work to the workqueue (see queue_work below)

queue_work(binder_deferred_workqueue, &binder_deferred_work);

}

mutex_unlock(&binder_deferred_lock); //release the lock

}

queue_work

//global workqueue

static struct workqueue_struct *binder_deferred_workqueue;

static int __init binder_init(void)

{

int ret;

//create the workqueue named "binder"

binder_deferred_workqueue = create_singlethread_workqueue("binder");

if (!binder_deferred_workqueue)

return -ENOMEM;

...

}

device_initcall(binder_init);

Definition of binder_deferred_work:

static DECLARE_WORK(binder_deferred_work, binder_deferred_func);

During Binder driver initialization, binder_init() calls create_singlethread_workqueue("binder"), which creates a workqueue named "binder". A workqueue is a simple and effective kernel thread mechanism provided by the kernel for deferring work.
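The interplay between binder_defer_work and the worker can be modelled without any kernel machinery. The sketch below is a toy under stated assumptions: ToyDeferred, DeferProc and DeferQueue are hypothetical names, the flag values are arbitrary, and the "worker" is just a function called on the same thread. It shows the two properties that matter here: flags accumulate with OR, and a process is put on the pending list only once until the worker picks it up.

```cpp
#include <cassert>
#include <deque>
#include <vector>

// Arbitrary flag values for illustration.
enum ToyDeferred : unsigned { kPutFiles = 1u, kFlush = 2u, kRelease = 4u };

struct DeferProc {
    unsigned deferred_work = 0;
    bool queued = false;            // models !hlist_unhashed(&deferred_work_node)
    std::vector<unsigned> handled;  // what the worker saw, one entry per run
};

struct DeferQueue {
    std::deque<DeferProc*> pending;  // models binder_deferred_list

    // Mirrors binder_defer_work: OR in the flag, enqueue only once.
    void defer(DeferProc& p, unsigned flag) {
        p.deferred_work |= flag;
        if (!p.queued) {
            p.queued = true;
            pending.push_back(&p);
        }
    }

    // Mirrors the loop in binder_deferred_func: drain the list and handle
    // all accumulated flags of each proc in one go.
    void run() {
        while (!pending.empty()) {
            DeferProc* p = pending.front();
            pending.pop_front();
            p->queued = false;
            unsigned defer = p->deferred_work;
            p->deferred_work = 0;
            p->handled.push_back(defer);
        }
    }
};
```

Deferring the work means binder_release itself stays short; the heavy teardown runs later on the workqueue thread.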

The func of binder_deferred_work is binder_deferred_func, which is examined next.

binder_deferred_func

static void binder_deferred_func(struct work_struct *work)

{

struct binder_proc *proc;

struct files_struct *files;

int defer;

do {

mutex_lock(&binder_main_lock); //take binder_main_lock

mutex_lock(&binder_deferred_lock);

preempt_disable(); //disable CPU preemption

if (!hlist_empty(&binder_deferred_list)) {

proc = hlist_entry(binder_deferred_list.first,

struct binder_proc, deferred_work_node);

hlist_del_init(&proc->deferred_work_node);

defer = proc->deferred_work;

proc->deferred_work = 0;

} else {

proc = NULL;

defer = 0;

}

mutex_unlock(&binder_deferred_lock);

files = NULL;

if (defer & BINDER_DEFERRED_PUT_FILES) {

files = proc->files;

if (files)

proc->files = NULL;

}

if (defer & BINDER_DEFERRED_FLUSH)

binder_deferred_flush(proc);

if (defer & BINDER_DEFERRED_RELEASE)

binder_deferred_release(proc); //see binder_deferred_release below

mutex_unlock(&binder_main_lock); //release the lock

preempt_enable_no_resched();

if (files)

put_files_struct(files);

} while (proc);

}

binder_deferred_release

Here proc is the binder_proc of the Bn side.

static void binder_deferred_release(struct binder_proc *proc)

{

struct binder_transaction *t;

struct rb_node *n;

int threads, nodes, incoming_refs, outgoing_refs, buffers,

active_transactions, page_count;

hlist_del(&proc->proc_node); //remove the proc_node entry

if (binder_context_mgr_node && binder_context_mgr_node->proc == proc) {

binder_context_mgr_node = NULL;

}

//free each binder_thread

threads = 0;

active_transactions = 0;

while ((n = rb_first(&proc->threads))) {

struct binder_thread *thread;

thread = rb_entry(n, struct binder_thread, rb_node);

threads++;

active_transactions += binder_free_thread(proc, thread);

}

//release each binder_node

nodes = 0;

incoming_refs = 0;

while ((n = rb_first(&proc->nodes))) {

struct binder_node *node;

node = rb_entry(n, struct binder_node, rb_node);

nodes++;

rb_erase(&node->rb_node, &proc->nodes);

incoming_refs = binder_node_release(node, incoming_refs); //key step

}

//delete each binder_ref

outgoing_refs = 0;

while ((n = rb_first(&proc->refs_by_desc))) {

struct binder_ref *ref;

ref = rb_entry(n, struct binder_ref, rb_node_desc);

outgoing_refs++;

binder_delete_ref(ref);

}

//release pending binder_work

binder_release_work(&proc->todo);

binder_release_work(&proc->delivered_death);

buffers = 0;

while ((n = rb_first(&proc->allocated_buffers))) {

struct binder_buffer *buffer;

buffer = rb_entry(n, struct binder_buffer, rb_node);

t = buffer->transaction;

if (t) {

t->buffer = NULL;

buffer->transaction = NULL;

}

//free the binder_buffer

binder_free_buf(proc, buffer);

buffers++;

}

binder_stats_deleted(BINDER_STAT_PROC);

page_count = 0;

if (proc->pages) {

int i;

for (i = 0; i < proc->buffer_size / PAGE_SIZE; i++) {

void *page_addr;

if (!proc->pages[i])

continue;

page_addr = proc->buffer + i * PAGE_SIZE;

unmap_kernel_range((unsigned long)page_addr, PAGE_SIZE);

__free_page(proc->pages[i]);

page_count++;

}

kfree(proc->pages);

vfree(proc->buffer);

}

put_task_struct(proc->tsk);

kfree(proc);

}

The main work done by binder_deferred_release:

- binder_free_thread: every thread in proc->threads

  - binder_send_failed_reply(send_reply, BR_DEAD_REPLY): sets the initiating thread's return_error to BR_DEAD_REPLY so that it returns immediately;

- binder_node_release: every node in proc->nodes

  - binder_release_work(&node->async_todo)

  - the death callbacks of all node->refs

- binder_delete_ref: every reference in proc->refs_by_desc

  - removes the reference

- binder_release_work: proc->todo, proc->delivered_death

  - binder_send_failed_reply(t, BR_DEAD_REPLY)

- binder_free_buf: every allocated buffer in proc->allocated_buffers

  - frees the allocated buffer

- __free_page: every physical page in proc->pages

static int binder_node_release(struct binder_node *node, int refs)

{

struct binder_ref *ref;

int death = 0;

list_del_init(&node->work.entry);

//release the node's pending async work

binder_release_work(&node->async_todo);

if (hlist_empty(&node->refs)) {

kfree(node); //no references left, so free the node directly

binder_stats_deleted(BINDER_STAT_NODE);

return refs;

}

node->proc = NULL;

node->local_strong_refs = 0;

node->local_weak_refs = 0;

hlist_add_head(&node->dead_node, &binder_dead_nodes);

hlist_for_each_entry(ref, &node->refs, node_entry) {

refs++;

if (!ref->death)

continue;

death++;

if (list_empty(&ref->death->work.entry)) {

//add a BINDER_WORK_DEAD_BINDER work item to the todo queue (consumed in binder_thread_read)

ref->death->work.type = BINDER_WORK_DEAD_BINDER;

list_add_tail(&ref->death->work.entry, &ref->proc->todo);

wake_up_interruptible(&ref->proc->wait);

}

}

return refs;

}

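This function walks every binder_ref of the binder_node. Where a death notification is registered, it adds a BINDER_WORK_DEAD_BINDER work item to the todo queue of the process that owns the binder_ref and wakes a binder thread waiting on proc->wait, which then runs binder_thread_read.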
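The loop at the heart of binder_node_release can be reduced to the following toy model. ClientProc, NodeRef and node_release are hypothetical names, the todo queue holds only cookies, and waking a thread is represented by a counter. The death_queued flag stands in for the list_empty(&ref->death->work.entry) check, which keeps an already queued notification from being queued twice.

```cpp
#include <cassert>
#include <cstdint>
#include <deque>
#include <vector>

struct ClientProc {
    std::deque<uintptr_t> todo;  // pending DEAD_BINDER cookies
    int wakeups = 0;             // models wake_up_interruptible(&proc->wait)
};

struct NodeRef {
    ClientProc* proc;           // process that holds this reference
    bool has_death;             // models ref->death != NULL
    uintptr_t cookie;           // the BpBinder pointer in the real code
    bool death_queued = false;  // models !list_empty(&death->work.entry)
};

// Visits every reference to the dying node and returns how many were seen.
int node_release(std::vector<NodeRef>& refs) {
    int visited = 0;
    for (NodeRef& ref : refs) {
        ++visited;
        if (!ref.has_death) continue;    // nobody asked to be told
        if (ref.death_queued) continue;  // notification already pending
        ref.death_queued = true;
        ref.proc->todo.push_back(ref.cookie);
        ++ref.proc->wakeups;
    }
    return visited;
}
```

Each client process is notified through its own todo queue, so one dying service can fan out to many clients in a single pass.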

Handling the death notification

binder_thread_read

static int binder_thread_read(struct binder_proc *proc,

struct binder_thread *thread,

binder_uintptr_t binder_buffer, size_t size,

binder_size_t *consumed, int non_block)

...

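//the binder thread sleeps here until the process has work; the wake_up_interruptible() call in binder_node_release wakes it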

wait_event_freezable_exclusive(proc->wait, binder_has_proc_work(proc, thread));

binder_lock(__func__); //take the lock

if (wait_for_proc_work)

proc->ready_threads--; //one fewer idle binder thread

thread->looper &= ~BINDER_LOOPER_STATE_WAITING;

while (1) {

uint32_t cmd;

struct binder_transaction_data tr;

struct binder_work *w;

struct binder_transaction *t = NULL;

//take the binder_work queued earlier off the todo queue; its type is BINDER_WORK_DEAD_BINDER

if (!list_empty(&thread->todo)) {

w = list_first_entry(&thread->todo, struct binder_work,

entry);

} else if (!list_empty(&proc->todo) && wait_for_proc_work) {

w = list_first_entry(&proc->todo, struct binder_work,

entry);

}

switch (w->type) {

case BINDER_WORK_DEAD_BINDER: {

struct binder_ref_death *death;

uint32_t cmd;

death = container_of(w, struct binder_ref_death, work);

if (w->type == BINDER_WORK_CLEAR_DEATH_NOTIFICATION)

...

else

cmd = BR_DEAD_BINDER; //this branch is taken

put_user(cmd, (uint32_t __user *)ptr); //copy to user space (consumed in getAndExecuteCommand)

ptr += sizeof(uint32_t);

//the cookie here is the BpBinder passed in earlier

put_user(death->cookie, (binder_uintptr_t __user *)ptr);

ptr += sizeof(binder_uintptr_t);

if (w->type == BINDER_WORK_CLEAR_DEATH_NOTIFICATION) {

...

} else

//move this work item to the delivered_death queue

list_move(&w->entry, &proc->delivered_death);

if (cmd == BR_DEAD_BINDER)

goto done;

} break;

}

}

...

return 0;

}

BR_DEAD_BINDER has now been written to user space; the user-space side then proceeds as follows:

getAndExecuteCommand

status_t IPCThreadState::getAndExecuteCommand()

{

status_t result;

int32_t cmd;

result = talkWithDriver(); //interact with the Binder driver

if (result >= NO_ERROR) {

size_t IN = mIn.dataAvail();

if (IN < sizeof(int32_t)) return result;

cmd = mIn.readInt32(); //read the command

pthread_mutex_lock(&mProcess->mThreadCountLock);

mProcess->mExecutingThreadsCount++;

pthread_mutex_unlock(&mProcess->mThreadCountLock);

result = executeCommand(cmd); //see executeCommand below

pthread_mutex_lock(&mProcess->mThreadCountLock);

mProcess->mExecutingThreadsCount--;

pthread_cond_broadcast(&mProcess->mThreadCountDecrement);

pthread_mutex_unlock(&mProcess->mThreadCountLock);

set_sched_policy(mMyThreadId, SP_FOREGROUND);

}

return result;

}

executeCommand

status_t IPCThreadState::executeCommand(int32_t cmd)

{

BBinder* obj;

RefBase::weakref_type* refs;

status_t result = NO_ERROR;

switch ((uint32_t)cmd) {

case BR_DEAD_BINDER:

{

BpBinder *proxy = (BpBinder*)mIn.readPointer();

proxy->sendObituary(); //see sendObituary below

mOut.writeInt32(BC_DEAD_BINDER_DONE);

mOut.writePointer((uintptr_t)proxy);

} break;

...

}

...

return result;

}

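Even if the same Bp registers several death recipients, the driver delivers only one BR_DEAD_BINDER for it; sendObituary then calls every recipient recorded in mObituaries, and does so only once.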

sendObituary

void BpBinder::sendObituary()

{

mAlive = 0;

if (mObitsSent) return;

mLock.lock();

Vector<Obituary>* obits = mObituaries;

if(obits != NULL) {

IPCThreadState* self = IPCThreadState::self();

//clear the death notification

self->clearDeathNotification(mHandle, this);

self->flushCommands();

mObituaries = NULL;

}

mObitsSent = 1;

mLock.unlock();

if (obits != NULL) {

const size_t N = obits->size();

for (size_t i=0; i<N; i++) {

//deliver the death notification (see reportOneDeath below)

reportOneDeath(obits->itemAt(i));

}

delete obits;

}

}
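The shape of sendObituary (detach the recipient list under the lock, mark it as sent, then call the recipients outside the lock) can be sketched as below. ObitBinder is a hypothetical class and recipients are plain std::function callbacks. Unlike the excerpt, this sketch checks the sent flag while holding the lock and silently ignores registrations made after the obituary was sent; both are simplifications chosen here, not a statement about the framework.

```cpp
#include <cassert>
#include <functional>
#include <memory>
#include <mutex>
#include <utility>
#include <vector>

class ObitBinder {
public:
    using Callback = std::function<void()>;

    void linkToDeath(Callback cb) {
        std::lock_guard<std::mutex> guard(lock_);
        if (obits_sent_) return;  // simplification: ignore late registrations
        if (!obituaries_) obituaries_ = std::make_unique<std::vector<Callback>>();
        obituaries_->push_back(std::move(cb));
    }

    void sendObituary() {
        std::unique_ptr<std::vector<Callback>> obits;
        {
            std::lock_guard<std::mutex> guard(lock_);
            if (obits_sent_) return;         // deliver at most once
            obits = std::move(obituaries_);  // detach the list (mObituaries = NULL)
            obits_sent_ = true;
        }
        // Callbacks run without the lock held, so they may call back in.
        if (obits) {
            for (Callback& cb : *obits) cb();
        }
    }

private:
    std::mutex lock_;
    bool obits_sent_ = false;
    std::unique_ptr<std::vector<Callback>> obituaries_;
};
```

Calling the recipients after releasing the lock avoids deadlock if a recipient touches the same object from inside its callback.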

reportOneDeath

void BpBinder::reportOneDeath(const Obituary& obit)

{

//promote the weak reference to an sp

sp<DeathRecipient> recipient = obit.recipient.promote();

if (recipient == NULL) return;

//invoke the death notification callback

recipient->binderDied(this);

}
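reportOneDeath holds only a weak reference to each recipient and promotes it before the call, so a recipient that has already been destroyed is simply skipped. The standard-library analogue of wp<>::promote() is std::weak_ptr::lock(). A minimal sketch, with WeakRecipient and report_one_death as hypothetical names:

```cpp
#include <cassert>
#include <memory>

struct WeakRecipient {
    int died = 0;
    void binderDied() { ++died; }
};

// Returns true if the callback was delivered, false if the recipient
// no longer exists.
bool report_one_death(const std::weak_ptr<WeakRecipient>& obit) {
    // lock() yields an empty shared_ptr once the object has been destroyed.
    std::shared_ptr<WeakRecipient> recipient = obit.lock();
    if (!recipient) return false;
    recipient->binderDied();
    return true;
}
```

Holding only a weak reference means that registering for a death notification does not by itself keep the recipient alive.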

The example at the start of these notes passes an AppDeathRecipient, so the callback that runs is the following method.

private final class AppDeathRecipient implements IBinder.DeathRecipient {

...

public void binderDied() {

synchronized(ActivityManagerService.this) {

appDiedLocked(mApp, mPid, mAppThread, true);

}

}

}

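Conclusion

Every process that uses Binder IPC opens /dev/binder. When a process exits abnormally, the Binder driver ensures that any /dev/binder file the process did not close properly is released: the release callback for /dev/binder runs, performs the cleanup, and checks whether death notifications are registered against the process's BBinder objects. Where one exists, a death notification message is sent to the corresponding BpBinder side.

A DeathRecipient is only meaningful on the Bp side, because it monitors the Bn side going away. If a Bn registered a death notification on itself, its own process would already be gone when the notification fired, so nothing could be delivered.

Each BinderProxy has a DeathRecipientList object that records its DeathRecipient entries; on the native side, BpBinder keeps its recipients in mObituaries.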